Outline

- Introduction

- What is a landing page?

- Landing page vs. home page

- How do landing pages actually work?

- 11 steps to building a landing page

- Landing page optimization tips

- Landing page examples

You can't take a 'build it and they will convert' approach to landing pages. These lead drivers can be powerful, but only if you are optimizing them for conversions. In this blog, we'll explore how landing pages work, optimization tips, examples, and steps to creating a landing page that actually works.

What is a landing page?

Even if you’re not sure exactly what a landing page is, you’ve almost certainly seen plenty of them.

A landing page (aka lead capture page) is a specific page on a website where a visitor “lands” to buy a product/service or to receive a resource (e.g. ebook, webinar invitation) in exchange for their contact information (e.g. email address).

The purpose of a landing page is to incentivize a visitor to take a single action, typically filling out a custom signup form. Landing pages seldom include navigation buttons, so visitors are less likely to click away from the page and more likely to take the desired action.

Key landing page statistics

The average landing page conversion rate across industries is 2.35%.

48% of marketers build a new landing page for each campaign.

Companies with 31 to 40 landing pages get seven times more leads than those with one to five landing pages.

Relevant embedded videos can increase landing page conversions by 86%.

Landing page vs. home page

A landing page is different to a homepage (i.e. the front page of a website) because the latter typically includes more navigation buttons and links in the menu tab, footer, sidebar, etc. to give visitors multiple options.

Also, call-to-action (CTA) prompts are broader on homepages (e.g. “Find out more.”) compared to landing pages (e.g. “Download this ebook” or “Join our newsletter”), because the landing page has one goal: to convince the visitor to take the desired action.

Another differentiator is that landing pages are typically tailored to a specific audience based on their interests and behaviors. For example, a subscriber may read about an upcoming webinar in a company’s newsletter and click on the link. They are then taken directly to a landing page designed to encourage them to register for the webinar by leaving details such as their name, email, job title, and industry. The company can then use this data to target the user in the future.

How do landing pages actually work?

We’ve established that landing pages are a good idea…if you can drive traffic to them. So, how do you do that? There are a few different ways:

Email marketing: You can put a link to a landing page in your emails, e.g. in your newsletter to convert subscribers.

Social media: Post landing page links across your social networks (e.g. Facebook, Instagram, Twitter).

Content marketing: You may embed landing page links within a blog post.

SEO: You can (read: should) optimize your landing pages for search to boost their discoverability on search engines like Google. We’ll look at this in more detail later.

Paid advertising: Whether social, search, native content, or display, paid advertising is a great way to drive traffic and conversions. Just make sure to get specific with your targeting to avoid wastage.

11 steps to building a landing page

Once you've decided on a landing page builder, it’s time to get creative. Below are 11 simple steps to building a landing page, from beginning to end:

1. Select a landing page template
2. Give your landing page a name
3. Add your content
4. Include visuals (images, videos)
5. Choose a domain name
6. Add links and CTA copy
7. Optimize it for SEO
8. Hit publish
9. Link to it from multiple sources/channels
10. Track performance
11. A/B test
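Linking from multiple channels and tracking performance go hand in hand: if every channel uses the same bare URL, it is hard to tell which one drove a signup. One common convention (not something this guide prescribes) is to tag each link with UTM query parameters. The sketch below assumes a hypothetical landing page URL, campaign name, and channel labels; `tag_link` is an illustrative helper, not part of any landing page builder.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def tag_link(url: str, source: str, medium: str, campaign: str) -> str:
    """Return `url` with utm_source, utm_medium and utm_campaign appended.

    Existing query parameters are kept; UTM values overwrite earlier ones.
    """
    parts = urlsplit(url)
    query = dict(parse_qsl(parts.query))
    query.update(
        {"utm_source": source, "utm_medium": medium, "utm_campaign": campaign}
    )
    return urlunsplit(parts._replace(query=urlencode(query)))


# Hypothetical landing page and campaign name, for illustration only.
LANDING_PAGE = "https://example.com/webinar"
CAMPAIGN = "spring-webinar"

# One tagged link per channel that points at the landing page.
CHANNELS = {
    "newsletter": ("newsletter", "email"),
    "twitter": ("twitter", "social"),
    "blog": ("blog", "content"),
}

links = {
    name: tag_link(LANDING_PAGE, source, medium, CAMPAIGN)
    for name, (source, medium) in CHANNELS.items()
}

for name, link in links.items():
    print(f"{name}: {link}")
```

Most analytics tools can then group visits and conversions by these parameters, which gives you a per-channel view of how the page performs.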

Landing page optimization tips

A typical landing page bounce rate benchmark is 60-90%. This means that if 10 people land on your landing page, six to nine of them will leave the page without taking the desired action.
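To make the arithmetic concrete, here is a minimal sketch of how the two rates could be computed from raw counts. The function names and the sample visitor numbers are illustrative assumptions; your analytics tool may define a "bounce" slightly differently.

```python
def bounce_rate(visitors: int, bounced: int) -> float:
    """Share of visitors who left without taking the desired action."""
    if visitors <= 0:
        raise ValueError("visitors must be positive")
    return bounced / visitors


def conversion_rate(visitors: int, conversions: int) -> float:
    """Share of visitors who completed the desired action."""
    if visitors <= 0:
        raise ValueError("visitors must be positive")
    return conversions / visitors


# The 10-visitor example from the text: six to nine visitors leave.
low = bounce_rate(visitors=10, bounced=6)
high = bounce_rate(visitors=10, bounced=9)
print(f"Bounce rate range: {low:.0%} to {high:.0%}")

# Hypothetical campaign: 47 signups from 2,000 visitors.
rate = conversion_rate(visitors=2000, conversions=47)
print(f"Conversion rate: {rate:.2%}")
```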

Luckily, there are tricks to make your landing pages more appealing, more persuasive, and more likely to convert.

1. Keep it simple

The purpose of a landing page is to get a visitor to take one action. Therefore, copy, images, graphics, buttons, etc. that don’t contribute to that goal are just noise. Visual clutter will act as a distraction, so only include what you must.

2. Clear CTA

If a visitor lands on your landing page but is confused about what they should do next, they’ll simply click off it. Ensure your CTA is a clear instruction. The visitor should know, within seconds, what to do and what they will receive in return.

Note: Personalized CTAs convert 202% better than generic CTAs.

3. Strong headlines

There is a temptation to be uber-creative with headlines, but headlines that are not straightforward and punchy are less likely to convert visitors.

4. A/B test copy and images

A/B testing different headlines, body copy, CTA copy, images, and colors will enable you to identify which elements perform best so that you can optimize the page for conversion. Indeed, 60% of companies believe A/B testing is highly valuable to conversion rate optimization (CRO).
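Deciding which variant "performs best" usually means checking that the difference in conversion rates is larger than chance alone would explain. The sketch below uses a two-proportion z-test, which is one common way to do this and not necessarily what your A/B testing tool uses. The visitor and conversion counts are made up for illustration.

```python
import math


def two_proportion_z_test(
    conv_a: int, n_a: int, conv_b: int, n_b: int
) -> tuple[float, float]:
    """Compare conversion rates of variants A and B.

    Returns (z, p_value), where p_value is two-sided.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("cannot test: pooled conversion rate is 0 or 1")
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical test: headline A vs. headline B, 1,000 visitors each.
z, p_value = two_proportion_z_test(conv_a=100, n_a=1000, conv_b=120, n_b=1000)
print(f"z = {z:.2f}, p = {p_value:.3f}")
if p_value < 0.05:
    print("The difference is unlikely to be due to chance.")
else:
    print("Not enough evidence yet; keep the test running.")
```

The 0.05 threshold is a convention rather than a rule. A lift that looks meaningful on a small sample can easily fail this check, which is a good reason to let a test run until each variant has seen enough traffic.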

5. Add testimonials

Add customer testimonials to enhance credibility and persuasion. Seeing social proof of your products and services is often what gets undecided customers over the line.

6. Optimize for SEO

If your landing pages are optimized for search, people can find them organically. This is great for brand awareness and lead generation.

Ensure your landing page targets the relevant industry-related search terms so that it appears high in the search engine results pages (SERPs).

A big tip for optimizing a landing page for SEO is to ensure relevant, industry-related keywords are used throughout the page, in your image alt text, and in the metadata (title and description). Specifically, think of your meta title and description as a billboard for your website, since they are often what searchers see in the results before they click.
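As a rough illustration of that check, the sketch below parses a page's HTML with Python's standard `html.parser` module and reports whether a target keyword appears in the title, the meta description, and any image alt text. The sample markup, the "Acme" brand, and the keyword are all hypothetical, and a simple substring match is only a starting point, not a full SEO audit.

```python
from html.parser import HTMLParser


class SeoFields(HTMLParser):
    """Collect the title, meta description and image alt text from a page."""

    def __init__(self) -> None:
        super().__init__()
        self.title = ""
        self.description = ""
        self.image_alts: list[str] = []
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attr_map.get("name") or "").lower() == "description":
            self.description = attr_map.get("content") or ""
        elif tag == "img":
            self.image_alts.append(attr_map.get("alt") or "")

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def keyword_report(html: str, keyword: str) -> dict[str, bool]:
    """Report where `keyword` appears (case-insensitive substring match)."""
    fields = SeoFields()
    fields.feed(html)
    kw = keyword.lower()
    return {
        "title": kw in fields.title.lower(),
        "description": kw in fields.description.lower(),
        "image_alt": any(kw in alt.lower() for alt in fields.image_alts),
    }


# Hypothetical landing page markup.
SAMPLE = """<html><head>
<title>Expense Management Software | Acme</title>
<meta name="description" content="Track receipts with expense management software.">
</head><body><img src="hero.png" alt="Dashboard screenshot"></body></html>"""

report = keyword_report(SAMPLE, "expense management")
for field, found in report.items():
    print(f"{field}: {'ok' if found else 'keyword missing'}")
```

In this sample the keyword is present in the title and description but missing from the image alt text, which is the kind of gap the check is meant to surface.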

7. Experiment with exit-intent pop-ups

Visitors may abandon your landing pages for many reasons – maybe they simply weren’t interested in your content. An exit-intent pop-up can serve as the last attempt to keep them on the site and get them to convert.

Exit-intent pop-ups work by embedding a short script in the page. On desktop, the script is triggered when a visitor’s cursor moves outside the browser’s upper page boundary; on mobile, it is triggered by one or more signals (e.g. pressing the back button or reaching a certain scroll percentage).
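The trigger rules described above can be sketched as two small decision functions. On a real page this logic would run as JavaScript in the browser; Python is used here only to show the rules themselves. The 50% scroll threshold and the "show only once per visit" guard are assumptions added for illustration, not settings from any particular pop-up tool.

```python
def desktop_exit_intent(cursor_y: float, already_shown: bool) -> bool:
    """Decide whether to show the pop-up on desktop.

    `cursor_y` is the pointer's vertical position relative to the top of
    the viewport, so zero or a negative value means the cursor has reached
    or crossed the upper page boundary.
    """
    return not already_shown and cursor_y <= 0


def mobile_exit_intent(
    back_pressed: bool,
    scroll_percent: float,
    already_shown: bool,
    scroll_threshold: float = 50.0,  # assumed threshold, tune per page
) -> bool:
    """Decide whether to show the pop-up on mobile.

    Mobile has no cursor, so the trigger relies on proxy signals instead.
    """
    if already_shown:
        return False
    return back_pressed or scroll_percent >= scroll_threshold


# Cursor heads for the tab bar: show the pop-up.
print(desktop_exit_intent(cursor_y=-3, already_shown=False))
# Cursor is still inside the page: do nothing.
print(desktop_exit_intent(cursor_y=240, already_shown=False))
# Mobile visitor presses back after scrolling 20% of the page.
print(mobile_exit_intent(back_pressed=True, scroll_percent=20, already_shown=False))
```

Showing the pop-up at most once per visit is a deliberate choice in this sketch, since a pop-up that reappears on every cursor movement is more likely to annoy visitors than convert them.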

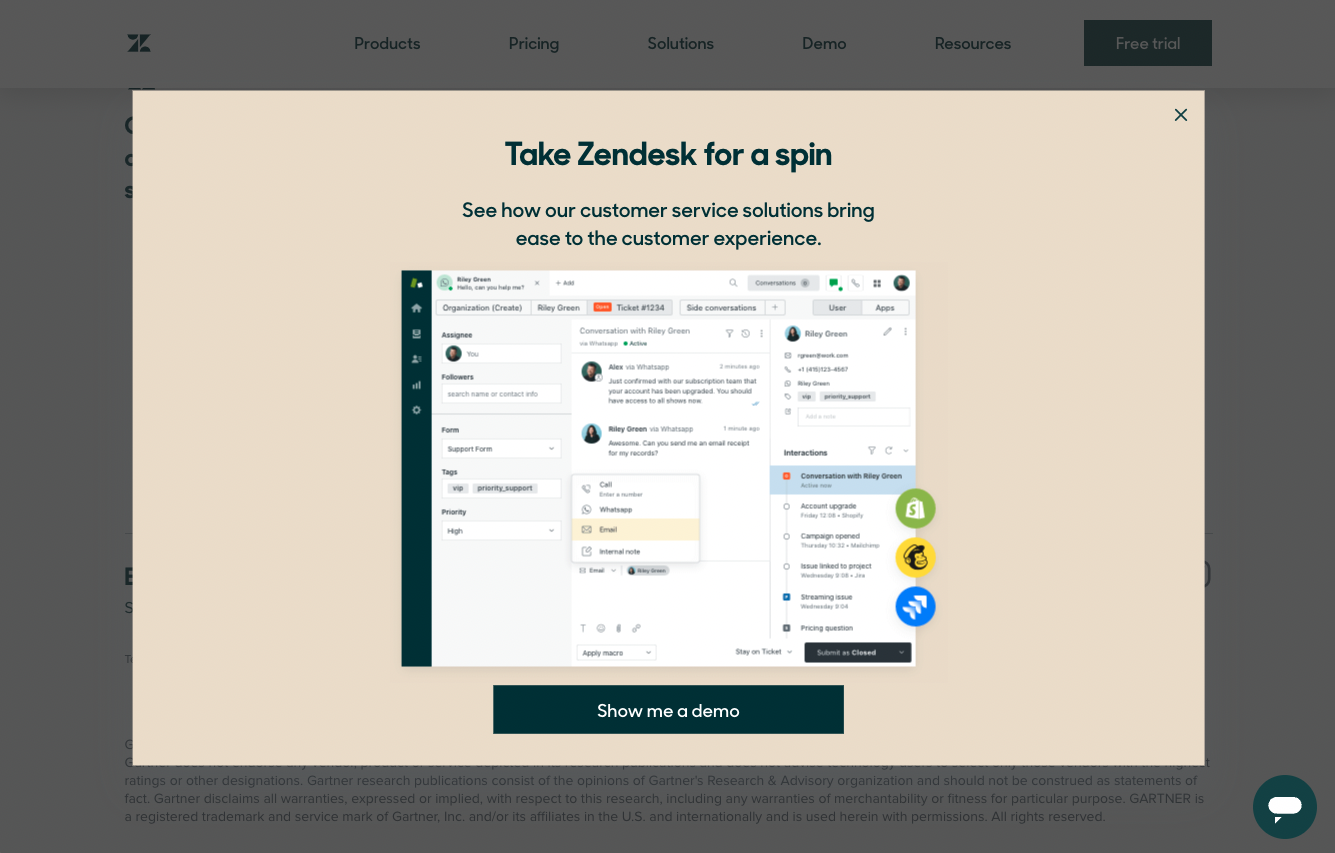

See how Zendesk utilizes an exit-intent pop-up to urge visitors to sign up for a demo.

Landing page examples

Let’s look at a few landing page examples from SaaS, product-led growth (PLG), and eCommerce businesses.

HelloFresh

Meal-kit company HelloFresh builds dedicated landing pages for its promotional offerings. The company tweeted about its sponsorship of the Hulu show How I Met Your Father. The link in the tweet leads to a landing page with the show’s branding and imagery. There is a “Watch now” button leading to Hulu, as well as a “Claim your meals” button for $120 off. The landing page is visually appealing, blending Hulu’s and HelloFresh’s brand identities, and the two CTAs, one serving each party, are clear. See below.

Dropbox

Dropbox built a landing page for its Dropbox Business plan which offers all the information a visitor needs to know. The heading is clear, the CTA button stands out, and there’s a toggle to see the price of different plans billed monthly vs. billed yearly. There is also a live chatbot to answer queries. Note: By 2022, 85% of companies are expected to adopt live chat support. See Dropbox’s landing page below.

Expensify

Expense management software company Expensify uses landing pages to capture visitors' contact information, namely their email addresses. Colors are bold, headings are strong, and the CTA is clear. See below.

Calendly

Scheduling platform Calendly built a landing page specifically to target enterprise businesses and marketers. The copy on this landing page is different from that of the homepage – the latter is broader, explaining what Calendly is, whereas the enterprise-tailored landing page tells the visitor what Calendly can do for their business. See below.

Disney Store

Disney Store has landing pages for popular product categories. For example, there is a landing page specifically for Minnie Mouse-themed products to convert visitors who are known to have an interest. See below.

Final word

Now that you have a high-performing landing page, it's time to consider how you will keep these new leads interested and drive them further down the funnel. Check out our blogs on lead nurturing examples, SaaS nurture journeys, and personalizing automated prospecting emails to see how this can be done.
