How we built an AI-powered subject line tester that predicts your email open rates before you press send

Outline

- Introduction

- Get the subject line wrong, and your open rates will suffer

- An AI-powered alternative to A/B testing your subject lines and improving open rates

- A powerful, practical use case for AI

In 2019 I sent my first long-form newsletter. The subject line was “I reviewed over 500 emails. Here is what I learned”. It wasn’t clickbait; I really did review 500 emails. I studied everything from subject lines to content, buttons, and email design – all to form a view of why the best marketers were achieving double the open, click, and conversion rates of the average marketer.

What stood out to me was the amount of work the best marketers put into their subject lines. They realized that the best design and content didn’t matter if no one opened the email. So they obsessed over subject lines, to the point where their brains seemed to have rewired to write high-performing ones naturally.

The rest of the people I studied simply didn’t spend enough time writing their subject lines or failed to learn from past mistakes. Many of them seemed to suffer from “marketing brain”, a phenomenon we all know well: from the moment we press the first key, we stop writing like a human and start writing like a marketer. You know what I’m talking about. You have emails like this in your inbox right now – and I assume you’re quickly archiving, ignoring, or deleting them, or unsubscribing.

Get the subject line wrong, and your open rates will suffer

Today, A/B testing is the obvious solution. Instead of guessing which subject line will lead to a higher open rate, you can test variants and optimize the final send towards the best performer.

To put this into perspective, let’s assume the following:

- 100,000 subscribers

- 20% open rate

- 3% click rate

- 10% conversion rate

- $150 average sale price

With these assumptions, and with the click rate measured as a share of opens, your email will earn $9,000. Increase the open rate by 10 percentage points, from 20% to 30%, and revenue rises to $13,500, a 50% increase! Subject lines matter.
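The arithmetic behind those figures can be checked with a few lines of Python. This is a minimal sketch under two assumptions that the numbers imply but the text does not spell out: the click rate is taken as a share of opens, and the open rate increase is read as 10 percentage points (20% to 30%), which is the reading that produces the 50% revenue uplift. The function name `email_revenue` is made up for illustration.

```python
def email_revenue(subscribers, open_rate, click_rate, conversion_rate, sale_price):
    """Estimate revenue from one send by walking down the funnel.

    Assumption: click_rate is a share of opens, and conversion_rate
    is a share of clicks.
    """
    opens = subscribers * open_rate
    clicks = opens * click_rate
    sales = clicks * conversion_rate
    return sales * sale_price


# Baseline scenario from the list of assumptions above.
baseline = email_revenue(100_000, 0.20, 0.03, 0.10, 150)

# Same funnel with the open rate lifted from 20% to 30%.
improved = email_revenue(100_000, 0.30, 0.03, 0.10, 150)

# Relative change in revenue between the two scenarios.
uplift = improved / baseline - 1

print(f"Baseline: ${baseline:,.0f}")  # Baseline: $9,000
print(f"Improved: ${improved:,.0f}")  # Improved: $13,500
print(f"Uplift:   {uplift:.0%}")      # Uplift:   50%
```

Because every step after the open is a fixed percentage, revenue scales in direct proportion to the open rate: 1.5 times the opens gives 1.5 times the revenue.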

But the unfortunate reality is that many of us don’t A/B test our emails as often as we should. The reasons vary: lack of time, tools that make testing too complex, automations we set up and then forget about, or simply not having the skill set to run a valid test. A/B testing is something everyone wants and talks about – but in reality, almost no one does.

An AI-powered alternative to A/B testing your subject lines and improving open rates

What if we didn’t need A/B testing to improve our email open rates? What if I could type in a subject line like “I reviewed 500 subject lines. Here is what I learned” and know the open rate before I even sent the email?

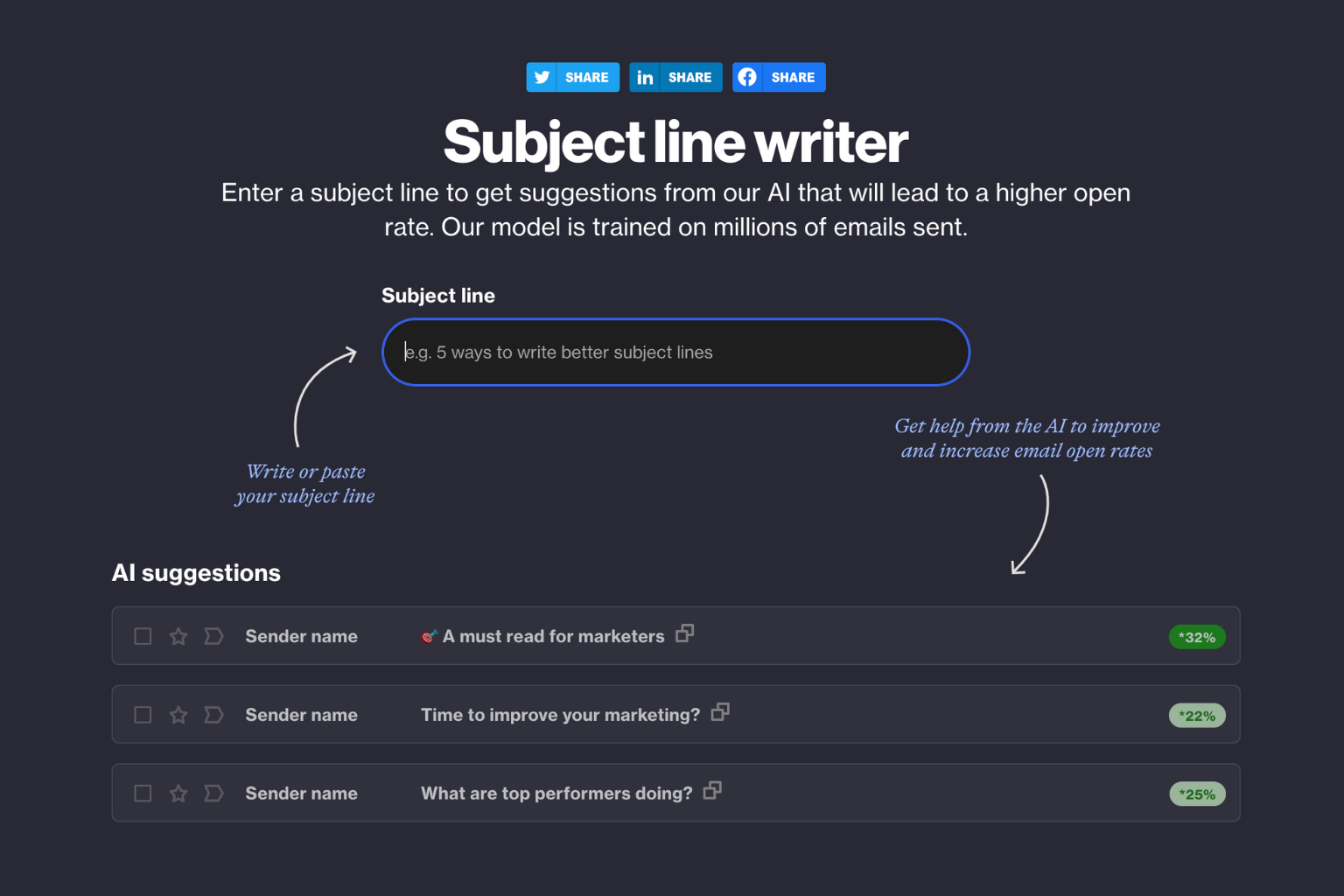

A couple of months ago, our team wanted to see if this was possible. So we trained a neural net on every subject line we had ever sent. We taught the AI which subject lines led to a high open rate and which led to a low one. We weighted our results based on the audience, the engagement of that audience, and a number of other factors such as the “from” name and email address.

Now, when we typed in a subject line, the AI would run it through our neural net, write alternative subject lines, and then predict what the open rate would likely be if the email were sent. If the predicted open rate for an alternative was higher than that of the subject line we had input, we would save the suggestion and run the experiment again. This sounds slow, but it all happens in just a couple of seconds. And the results exceeded our expectations.
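The loop described above (score the input, generate alternatives, keep any that score higher, repeat) can be sketched roughly as follows. This is an illustration only, not Ortto’s implementation: `predict_open_rate` and `generate_alternatives` stand in for the trained neural net and the subject line writer, and the `toy_*` functions and the `max_rounds` cap are hypothetical placeholders added so the sketch runs on its own.

```python
from typing import Callable, Iterable, List, Tuple


def improve_subject_line(
    subject: str,
    predict_open_rate: Callable[[str], float],
    generate_alternatives: Callable[[str], Iterable[str]],
    max_rounds: int = 5,  # assumption: the article does not say when to stop
) -> Tuple[str, float]:
    """Keep the best-scoring subject line found over repeated rounds."""
    best, best_rate = subject, predict_open_rate(subject)
    for _ in range(max_rounds):
        improved = False
        for candidate in generate_alternatives(best):
            rate = predict_open_rate(candidate)
            if rate > best_rate:
                # Save the better suggestion and build on it next round.
                best, best_rate = candidate, rate
                improved = True
        if not improved:
            break  # no alternative beat the current best
    return best, best_rate


def toy_predict(subject: str) -> float:
    # Hypothetical placeholder, NOT a trained model: an arbitrary rule
    # that scores shorter lines higher, just to make the loop runnable.
    return max(0.0, 0.40 - 0.005 * len(subject))


def toy_alternatives(subject: str) -> List[str]:
    # Hypothetical placeholder: proposes one alternative by dropping
    # the last word, and stops once three words remain.
    words = subject.split()
    return [" ".join(words[:-1])] if len(words) > 3 else []


original = "I reviewed over 500 emails. Here is what I learned"
best, rate = improve_subject_line(
    original, toy_predict, toy_alternatives, max_rounds=3
)
```

Passing the predictor and the generator in as functions keeps the loop independent of whichever model sits behind them, so the same few lines would work with a real scoring model swapped in for the toy ones.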

Using the subject line AI, we could give the user an accurate predicted open rate for the subject line they input, and suggest alternatives that might lead to a higher open rate. When these subject lines were adopted, the actual result would only deviate from the prediction by plus or minus a couple of basis points. This accuracy meant we could almost guarantee a dramatic open rate increase for all emails sent using the subject line AI.

A powerful, practical use case for AI

So here I am, a mere human, reviewing 500 emails and making suggestions for how email subject lines can be improved. But with AI, it’s the equivalent of reviewing 5 billion subject lines and then jamming all those learnings into a purpose-built “brain” that can write high open rate subject lines every single time.

AI makes what once seemed impossible, possible. It’s practical use cases like this, ones that lead to meaningful results, that excite me about the future of AI and its contribution to the industry as a whole. I fundamentally believe AI will disrupt traditional A/B testing and many other tools that marketers use today.

If you’d like to try the AI subject line writer, visit our AI labs. It’s free to use and does not require you to sign up or be a user of Ortto. It’s also a lot of fun! This feature is now standard in Ortto when you create emails. We still offer A/B testing, but my feeling is that this might just be the beginning of the end of A/B testing, and that might not be a bad thing.
